The rapid evolution of generative AI tools, spearheaded by models like ChatGPT, Gemini, and Claude, has revolutionized content creation. However, this ease of generation poses a significant challenge to academic integrity, professional authenticity, and content quality control. As AI-generated content becomes virtually indistinguishable from human writing, the market for AI detection tools has surged. These tools are now essential for educators, publishers, and businesses attempting to safeguard authenticity.

This in-depth comparison analyzes six major AI detection tools (Turnitin, GPTZero, Copyleaks, Originality.ai, ZeroGPT, and QuillBot) to determine which is most reliable. We assess their accuracy, performance on hybrid and humanized content, and overall utility.

How AI Detection Works (Brief Overview)

Modern AI detection primarily relies on identifying statistical patterns inherent in large language model (LLM) outputs. Unlike human writing, which is characterized by a high degree of variability and unique style, AI-generated text often exhibits statistical predictability. The key concepts used by detectors include:

- Perplexity: A measure of how “surprised” a language model is by a sequence of words. Human writing tends to have higher perplexity (more unpredictability), while AI-generated text typically has lower perplexity because the model chooses highly probable words.

- Burstiness: This refers to the variation in sentence structure and length. Human writing often features a mix of short, punchy sentences and long, complex ones (high burstiness). AI-generated text often maintains a more uniform sentence structure, resulting in low burstiness.

- Probability-Based Detection: This involves checking the likelihood of a word or phrase appearing, based on the model that may have generated the text (Masrour et al., 2025).
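
To make the first two metrics concrete, here is a minimal Python sketch. It is an illustration under stated assumptions, not how any of the detectors below actually work: the per-token probabilities are assumed to come from some scoring language model (not shown here), and burstiness is approximated as the coefficient of variation of sentence length, which is only one possible proxy. The function names are hypothetical.

```python
import math
import re
import statistics


def perplexity(token_probs):
    """Perplexity from the probability a scoring model assigned to each token.

    Assumption: token_probs are values in (0, 1] supplied by an external
    language model; this sketch does not compute them.
    """
    if not token_probs:
        raise ValueError("need at least one token probability")
    # Average negative log-likelihood per token, then exponentiate.
    avg_neg_log = -sum(math.log(p) for p in token_probs) / len(token_probs)
    return math.exp(avg_neg_log)


def burstiness(text):
    """Proxy for burstiness: std. deviation of sentence length / mean length.

    Assumption: sentences are split naively on ., ! and ?; length is the
    whitespace-separated word count.
    """
    sentences = [s for s in re.split(r"[.!?]+", text) if s.strip()]
    lengths = [len(s.split()) for s in sentences]
    if len(lengths) < 2:
        return 0.0  # a single sentence has no variation to measure
    return statistics.pstdev(lengths) / statistics.mean(lengths)


# Predictable tokens (high probabilities) give low perplexity.
print(perplexity([0.9, 0.8, 0.95]))
# Less predictable tokens give higher perplexity.
print(perplexity([0.2, 0.05, 0.1]))

# Uniform sentence lengths give zero burstiness under this proxy.
print(burstiness("One two three. Four five six."))
# Mixing a one-word sentence with a long one raises it.
print(burstiness("Stop. This sentence is much longer than the first one."))
```

Under this sketch, text with low perplexity and low burstiness would look more machine-like, which matches the intuition described above. Real detectors combine many more signals.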

The most formidable challenge at present is detecting humanized AI text. Users can now actively evade detection by editing AI output or running it through paraphrasing tools. These “humanizers” are designed to deliberately increase perplexity and burstiness, scattering the original statistical patterns and turning the text into a challenging “hybrid” of human and machine input. A detector’s robustness against this evasion is now the single most important measure of its reliability.

Evaluation Criteria for This Comparison

Our comparison focuses on real-world utility and objective performance metrics:

- Accuracy and Consistency: The tool’s ability to correctly label pure human text and pure AI text across different lengths and topics.

- Transparency of Method: How much insight the tool provides into its decision-making, such as highlighting specific sentences or displaying confidence heatmaps.

- Performance on Short vs. Long Texts: Assessing sensitivity. Many free tools are prone to errors on short snippets, making performance on longer articles (over 500 words) a truer test.

- Ability to Detect Humanized Content: The detector’s resilience against text that has been modified, paraphrased, or run through AI-evasion tools.

- Ease of Access: Whether the tool is freely available, subscription-based, or locked behind institutional licensing.

- Update Frequency and User Trust: The platform’s reputation and its proven ability to update models to counteract newer, less detectable LLMs.
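
The accuracy criterion above can be made measurable with a small labeled test set. The following Python sketch shows one way to compute accuracy alongside false positive and false negative rates. The sample data, the 0.5 threshold, and the function name `detector_metrics` are illustrative assumptions, not results or methods from this comparison.

```python
def detector_metrics(results, threshold=0.5):
    """Summarize a detector's performance on labeled samples.

    results: iterable of (is_ai, ai_score) pairs, where is_ai is the true
    label and ai_score is the detector's AI probability in [0, 1].
    threshold: score at or above which a text counts as flagged (assumed).
    """
    tp = fp = tn = fn = 0
    for is_ai, score in results:
        flagged = score >= threshold
        if is_ai and flagged:
            tp += 1  # AI text correctly flagged
        elif is_ai:
            fn += 1  # AI text missed
        elif flagged:
            fp += 1  # human text wrongly flagged
        else:
            tn += 1  # human text correctly passed
    total = tp + fp + tn + fn
    return {
        "accuracy": (tp + tn) / total if total else 0.0,
        "false_positive_rate": fp / (fp + tn) if (fp + tn) else 0.0,
        "false_negative_rate": fn / (fn + tp) if (fn + tp) else 0.0,
    }


# Hypothetical scores for two AI-written and two human-written samples.
samples = [(True, 0.9), (True, 0.2), (False, 0.7), (False, 0.1)]
print(detector_metrics(samples))
```

Reporting the false positive rate separately matters because a detector can post a high overall accuracy while still wrongly flagging a meaningful share of genuine human writing.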

The 6 AI Detection Tools Compared

1. Turnitin AI Detector

Overview: Turnitin is the dominant force in the education sector, utilized by universities and high schools globally. It seamlessly integrates its AI detection feature into its existing plagiarism and originality reporting workflow. The tool employs a proprietary linguistic fingerprinting method, demonstrating high reliability specifically on formal, structured academic work.

Pros:

- Industry Standard for Academia: Backed by institutional trust and widely accepted in academic policy.

- High Reliability for Academic Essays: Consistent testing shows high scores on structured, AI-generated essays, even when attempts are made to slightly modify the text.

- Low False Positive Rate on Formal Text: Designed to minimize flagging genuine student work as AI-generated.

Cons:

- Institutional Access Only: Not accessible to the general public or professional users outside of licensed academic institutions.

- Limited Transparency: The decision-making process is a closed system, providing a high-level score but few deep insights into why a particular sentence was flagged.

2. GPTZero

Overview: Launched by a student researcher, GPTZero quickly became a public-facing pioneer in AI text detection. It is renowned for its straightforward approach, primarily focusing on burstiness and perplexity metrics. It offers a free tier, making it popular in educational and individual use cases.

Pros:

- Highly Accessible and Free Tier: Easy to use without registration, appealing to students and teachers for quick checks.

- Strong Accuracy on Pure AI Text: Shows excellent performance when identifying unedited content generated directly by major LLMs.

- Focus on Low False Positives: Historically designed to be cautious about flagging human writing, maintaining a relatively low false positive rate on diverse texts.

Cons:

- Vulnerability to Paraphrasing: Because the tool relies on baseline perplexity, its AI scores can drop sharply, or even fall to zero, once the text has been edited or run through a humanizer.

- Struggles with Hybrid Content: Less reliable in identifying text where human and AI input are significantly mixed.

3. Copyleaks AI Content Detector

Overview: Copyleaks is a comprehensive content verification platform that caters to both universities and large enterprises. It combines advanced machine learning with linguistic models to provide a highly detailed analysis of authorship, often used for detailed content forensics.

Pros:

- Detailed Output and Transparency: Provides an excellent visual experience with sentence-by-sentence heatmaps, highlighting the exact probability score for each segment of the text (AI, Human, or Hybrid).

- High Resilience to Hybrid Text: Proven to be one of the more robust detectors when faced with partially edited or humanized content, making it a powerful tool for sophisticated checking.

- Dual Functionality: Offers both AI detection and traditional plagiarism checks, enhancing its utility for auditing.

Cons:

- Potential for Over-Flagging: Its high sensitivity can sometimes lead to flagging heavily paraphrased human text as AI-assisted due to the alteration of original stylistic markers.

- Freemium Restrictions: The most accurate, bulk, and API features require a paid subscription.

4. Originality.ai

Overview: Built specifically for professional integrity—publishers, content agencies, and SEO professionals—Originality.ai is designed to provide high-stakes assurance for content destined for public distribution. It operates on a paid, credit-based system.

Pros:

- Exceptional Professional Accuracy: Known for strong detection scores on long-form, complex articles, which is crucial for content marketing and publishing integrity.

- Robust Humanized Text Detection: Consistently cited as a top performer against paraphrased and subtly edited AI text, demonstrating high confidence in detecting evasion attempts.

- Combined AI and Plagiarism Check: Offers essential all-in-one auditing for content professionals.

Cons:

- May Flag Edited AI Text: While a strength against cheating, it means that even a human’s heavy editing of an AI draft may still be identified as machine-assisted, which can be an issue for co-authored or revised content.

5. ZeroGPT

Overview: ZeroGPT is perhaps the best known of the completely free AI content detectors, primarily due to its aggressive marketing and simple user interface. It is often the first tool students and casual users turn to for an instant judgment.

Pros:

- Simple and Completely Free: No sign-up is required, offering an immediate verdict, which drives its mass adoption.

- Good for Raw AI Checks: Like many free tools, it can provide a high-percentage AI score for completely untouched LLM-generated output.

Cons:

- Limited Transparency: Offers little context for its binary decision beyond a percentage, hindering accurate assessment by the user.

- Low Reliability on Human Writing: Highly susceptible to false positives on genuine human writing. Studies have shown it consistently misclassifies structured, formal, or polished human-written texts (such as academic mission statements) as AI-generated due to its oversensitivity to stylistic uniformity.

- Easily Evaded: Minimally modified text frequently slips past it, which makes it unreliable for high-stakes assessment.

6. QuillBot AI Detector

Overview: QuillBot is primarily known as a powerful AI-driven paraphrasing tool. Its recent addition of an AI detection capability places it in a unique position: a tool that can both create and attempt to detect AI-generated content.

Pros:

- Hybrid Knowledge: Because the developer owns both the detection and the paraphrasing technology, the detection model is trained to recognize the patterns created by its own humanizing attempts, which can give it an edge over generic detectors.

- Integration: Offers a seamless workflow for users who check their text and then instantly revise it.

Cons:

- Newer Tool: The AI detection component is less mature and less rigorously tested than the established platforms.

- Variable Consistency: While promising, its performance against various humanizing methods and different LLMs can be inconsistent.

Comparative Table (AI Detector Test Results)

This table summarizes performance estimates across key utility metrics, providing a clear overview of the AI detector test results and reliability scores.

| Detector | Accuracy Estimate | Humanized Text Performance | Ease of Access | Cost | Verdict |
| --- | --- | --- | --- | --- | --- |
| Turnitin | 98% | High | Institutional | Licensed | The most authoritative and reliable solution for universities. |
| GPTZero | 80% | Low | Public | Freemium | Best free option for a quick first check; easily bypassed by editing. |
| Copyleaks | 88% | High | Public/API | Freemium | Excellent transparency and detailed sentence-level results. |
| Originality.ai | 89% | High | Web | Paid (credit-based) | Best professional accuracy; robust against most AI humanizing attempts. |
| ZeroGPT | 77% | Low | Public | Free | Simple interface but limited utility; poor reliability on formal human writing. |
| QuillBot | 84% | Medium–High | Public | Freemium | A valuable hybrid option due to its specialized knowledge of paraphrasing. |

Humanized AI Text Test Results

The true measure of a detector’s value in 2025 is how well it handles humanized AI text. To test this, raw AI text is run through various AI humanizer tools, software explicitly designed to rewrite content to evade detection.

When this modified text is submitted, the results highlight a clear divide:

The free, purely perplexity-based tools (like ZeroGPT and GPTZero) are the most easily defeated. They often produce results of 0% to 10% AI, demonstrating that simple paraphrasing is an effective countermeasure against their baseline models.

In contrast, professional platforms like Originality.ai and Copyleaks often still flag the text with high confidence (typically 60-90% AI), indicating their models look for deeper, structural linguistic patterns that the humanizing tools fail to entirely mask. QuillBot’s detector also performs surprisingly well in this category, likely due to its unique position as both the creator and checker of rewritten content.

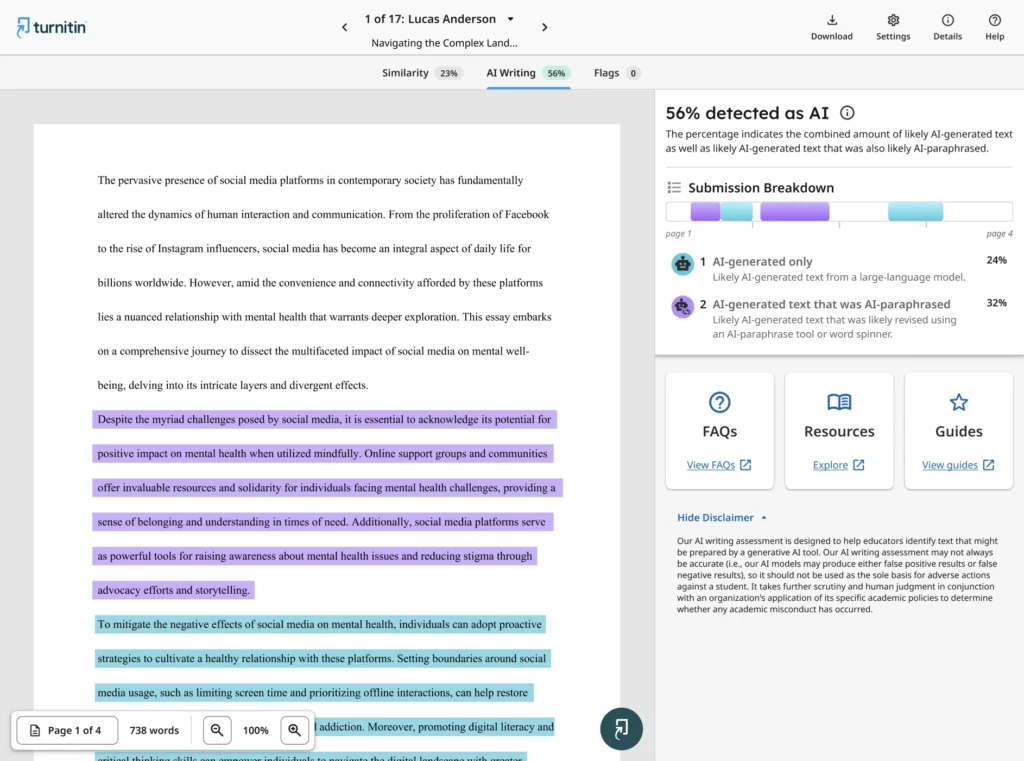

However, the standout performer in this category is Turnitin.

Turnitin not only flags passages likely to have been AI-written but also provides detailed segment-by-segment flags and percentage breakdowns for text that appears AI-paraphrased or altered using humanizing tools or word spinners. This granular feedback helps identify sections that may have been superficially rewritten yet still retain the statistical fingerprint of machine-generated language.

Its ability to detect AI-modified content — not just purely generated text — makes Turnitin’s AI detection system the most comprehensive and academic-grade option available.

This test underscores a critical reality: relying solely on free, surface-level AI checkers for high-stakes academic or professional writing is a high-risk gamble.

Tools like Turnitin, Copyleaks, and Originality.ai remain significantly harder to evade, offering a clearer, more trustworthy view of text authenticity in the age of advanced AI rewriting.

Key Insights — Which Detector Is Most Reliable?

Which AI content detector is best depends entirely on your context and tolerance for risk:

- For Academic Institutions: Turnitin is the undisputed leader, offering the highest reliability within a structured educational environment.

- For Content Professionals and Publishers: Originality.ai offers the most robust and accurate solution for long-form content integrity and evasion detection.

- For Comprehensive Analysis and Transparency: Copyleaks provides the most detailed visual evidence, making it the top choice for content auditing and forensic analysis.

- For Preliminary/Low-Stakes Checks: GPTZero is the best free option, provided you understand its significant weakness against humanized text. Avoid ZeroGPT for anything critical due to false positives.

- For Hybrid Users: QuillBot provides a compelling and evolving solution for those who frequently use paraphrasing tools.

The final takeaway is that no single tool operates with 100% accuracy. The most reliable strategy is a multi-tool approach combined with informed human evaluation. False positives in AI detection tools are a real risk, and the human expert must always have the final say, especially as AI text evolves rapidly.
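
The multi-tool approach can be sketched as a simple triage step that never issues a verdict on its own and only decides how urgently a human should look. In the Python sketch below, the detector names, scores, thresholds, and the `triage` function are all hypothetical. The vendors' real APIs are not used or assumed here.

```python
def triage(scores, flag_threshold=0.5, min_agreement=2):
    """Route a document for human review based on several detectors' scores.

    scores: dict mapping a detector name to its AI probability in [0, 1].
    flag_threshold: score at or above which a detector counts as flagging.
    min_agreement: number of flagging detectors needed for priority review.
    Returns (decision, sorted list of detectors that flagged the text).
    """
    flagged = sorted(name for name, s in scores.items() if s >= flag_threshold)
    if len(flagged) >= min_agreement:
        decision = "priority review"  # several tools agree: look closely
    elif flagged:
        decision = "low-priority review"  # a lone flag may be a false positive
    else:
        decision = "no flag"
    return decision, flagged


# Hypothetical scores gathered manually from three tools.
print(triage({"detector_a": 0.92, "detector_b": 0.71, "detector_c": 0.08}))
print(triage({"detector_a": 0.64, "detector_b": 0.12, "detector_c": 0.05}))
```

Requiring agreement between tools before escalating is one way to reduce the impact of a single detector's false positives. The final judgment still rests with the human reviewer.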

Final Thoughts

The AI detection arms race is a high-speed, perpetual cycle of innovation. As new LLMs are released, detection models must immediately be retrained to adapt.

Reliability is less about a single high number and more about resilience to evasion. The tools that have invested heavily in sophisticated, multi-layered models (Turnitin, Originality.ai, Copyleaks) have proven to be the most robust. They serve as essential guides, helping to point out statistically unnatural content.

Remember, AI detectors are tools to inform, not replace, judgment. Upholding academic and content integrity requires clear policy, informed human review, and a strategic, multi-layered approach to content verification.
