The widespread adoption of generative AI tools like ChatGPT, Gemini, and Claude has permanently reshaped the landscape of content creation and academic writing. With this technological revolution came the necessity of verification. Today, AI detection tools are essential for maintaining integrity in schools, universities, and professional publishing houses.

Yet, a new source of anxiety has emerged for honest writers and students: the false positive in AI detection.

A false positive occurs when an AI checker, however sophisticated, incorrectly flags original, human-written content as machine-generated. This is an alarming experience: you wrote every word yourself, yet your essay, thesis, or report is returned with a concerning AI score.

This guide explains why this happens, how to minimize the risk, and how to interpret an official report such as the Turnitin AI report so you can defend your authentic work.

What Are False Positives in AI Detection?

A false positive (FP) is a fundamental concept in testing and classification. In the context of AI detection tools, it specifically refers to:

False Positive (AI Detection): An outcome where a detector (like Turnitin, GPTZero, Copyleaks, or ZeroGPT) classifies a text as “AI-Generated” when, in reality, the entire text was produced solely by a human writer.

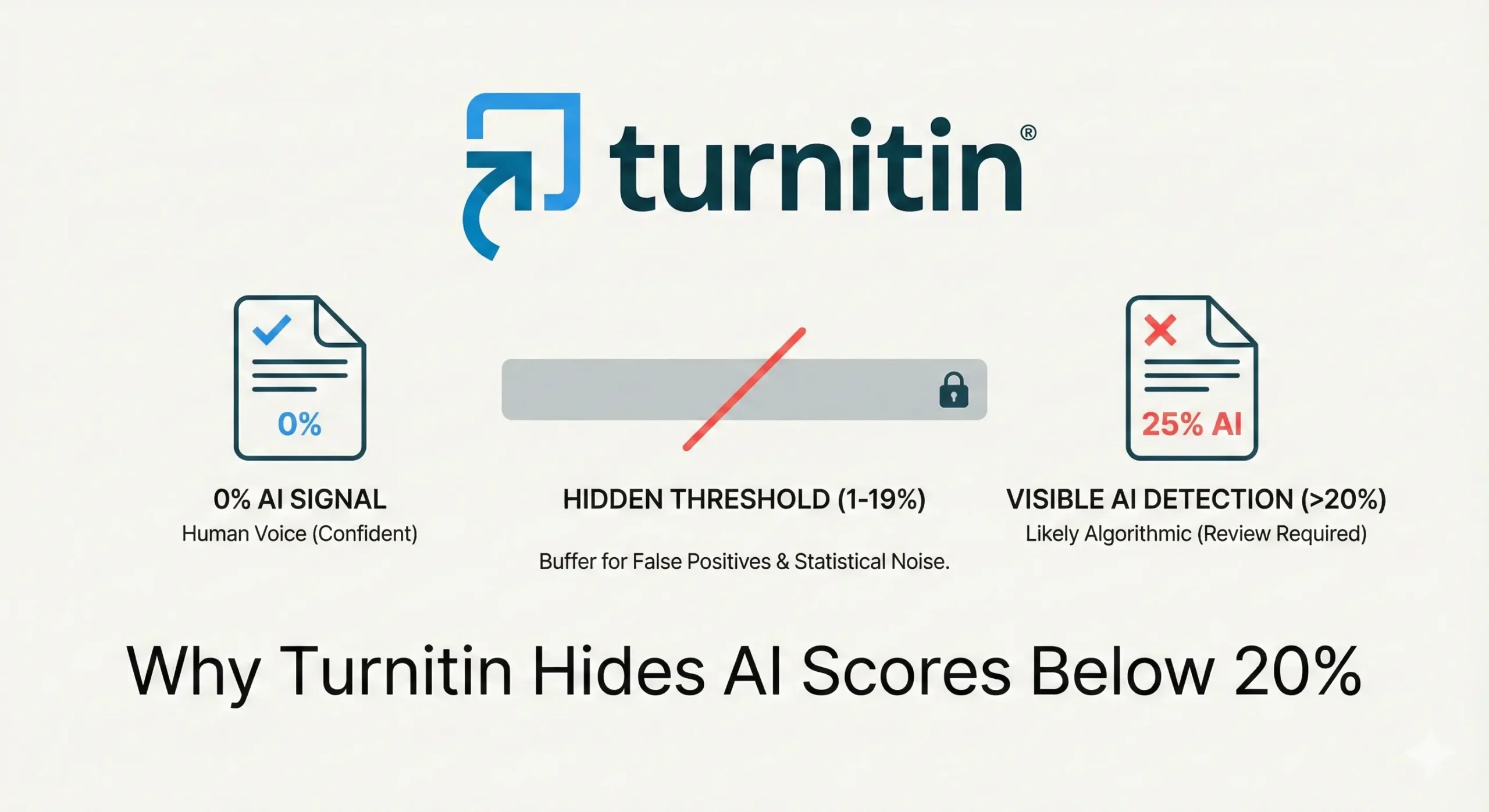

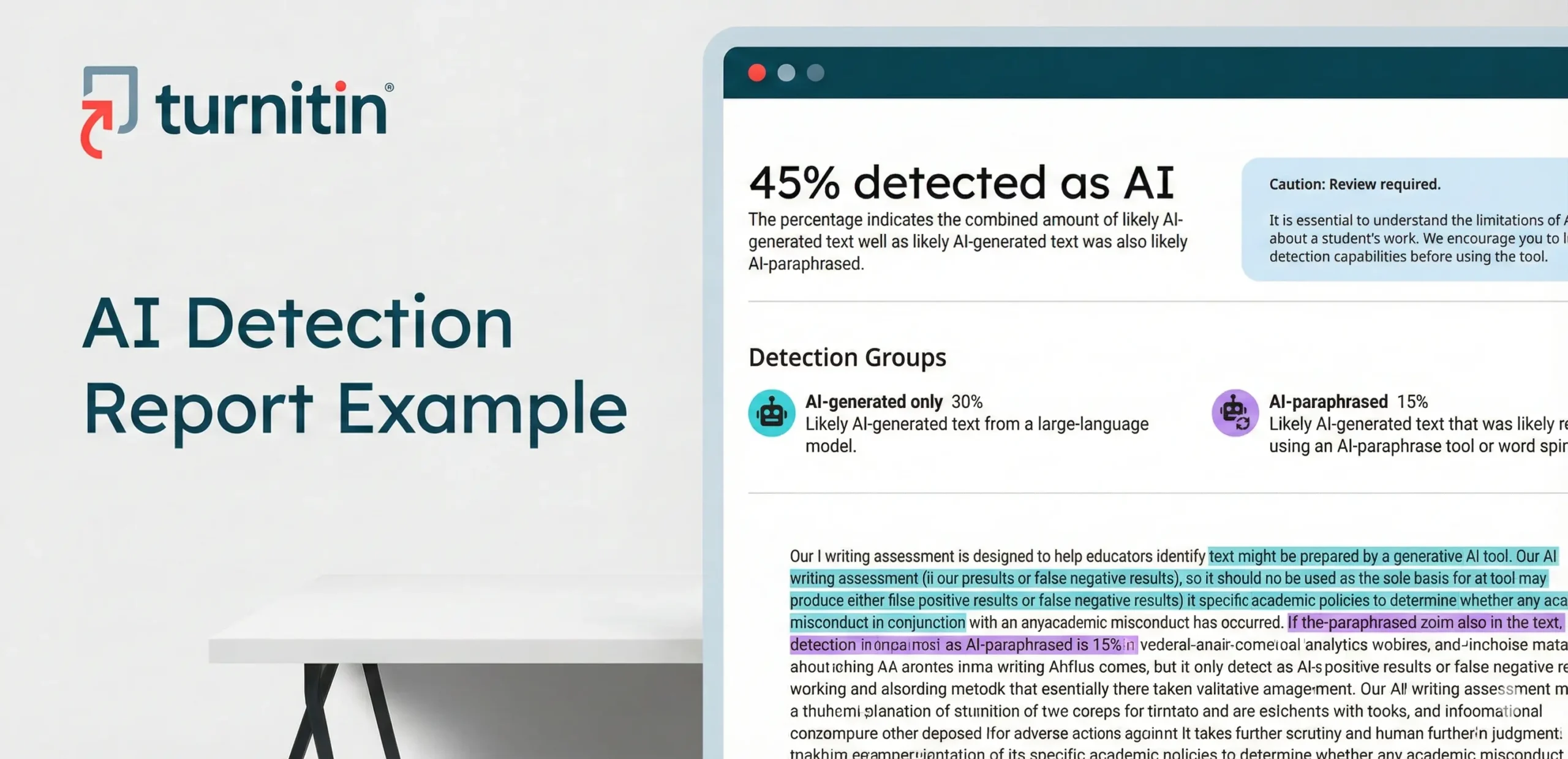

This is the opposite of a false negative, which occurs when a tool fails to detect content that was actually written by AI. All major detection platforms, including Turnitin, strive for an extremely low false positive rate (Turnitin reports its document-level false positive rate to be less than 1% for submissions with over 20% AI content). Even so, the sheer volume of writing submitted globally means that false accusations are an unfortunate reality for some authentic writers.
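To see why a small rate still matters at scale, here is a minimal sketch of the arithmetic. The function name `expected_false_flags` and the document volumes are hypothetical, and the 1% figure is used only as an upper bound on the rate quoted above, not as a measured value.

```python
def expected_false_flags(human_docs: int, false_positive_rate: float) -> float:
    """Expected number of human-written documents wrongly flagged as AI."""
    if not 0.0 <= false_positive_rate <= 1.0:
        raise ValueError("false_positive_rate must be between 0 and 1")
    return human_docs * false_positive_rate


# Hypothetical submission volumes, for illustration only.
for docs in (1_000, 100_000, 10_000_000):
    flagged = expected_false_flags(docs, 0.01)  # 1% used as an assumed upper bound
    print(f"{docs:>10,} human-written documents -> about {flagged:,.0f} wrongly flagged")
```

The takeaway is that a rate that sounds negligible for one document can still translate into many affected writers once the number of submissions grows.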

Understanding the underlying mechanics is the first step toward avoiding this pitfall.

Why Human Writing Gets Flagged: The Causes of AI Detector Error

AI detectors analyze patterns, predictability, and sentence flow, looking for signals that deviate from known human writing patterns. When human writing, especially academic or technical writing, unintentionally mimics these machine-like traits, a false positive can occur.

The primary causes relate to the core concepts of Perplexity (predictability) and Burstiness (sentence variation):

1. Predictable Sentence Structure and Phrasing

AI models, trained on trillions of words, often output text that is statistically “smooth,” meaning the next word is highly probable given the preceding words (low perplexity).

- Human Mimicry: A human writer who is extremely concise, uses short, simple sentences consistently, or relies on standardized, formal academic phrases (“In conclusion,” “It is therefore evident that,” “The core finding is…”) can accidentally create a stretch of text that is statistically too predictable, triggering the detector. This is often why text from non-native English speakers or those adhering strictly to a formal template might get flagged.
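As a rough illustration of what "low perplexity" means, the sketch below applies the standard definition of perplexity (the exponential of the average negative log-probability per token). The per-token probabilities are invented for the example, and commercial detectors do not publish their exact scoring, so treat this as a picture of the concept rather than any tool's actual method.

```python
import math


def perplexity(token_probs):
    """Perplexity = exp of the average negative log-probability per token."""
    if not token_probs:
        raise ValueError("need at least one token probability")
    avg_neg_log = -sum(math.log(p) for p in token_probs) / len(token_probs)
    return math.exp(avg_neg_log)


# Hypothetical probabilities a language model might assign to each next word.
formulaic = [0.9, 0.8, 0.85, 0.9, 0.8]    # stock phrases: each word is easy to guess
surprising = [0.2, 0.05, 0.3, 0.1, 0.15]  # unusual wording: each word is hard to guess

print("formulaic :", round(perplexity(formulaic), 2))
print("surprising:", round(perplexity(surprising), 2))
```

The formulaic sequence produces the lower value, which is the kind of signal that can make a tightly templated human paragraph look machine-like.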

2. Overly Clean Grammar and High Coherence

AI tools are inherently clean writers; they eliminate the typical human imperfections like hesitations, run-on sentences, or slightly awkward phrasings.

- The “Perfect” Trap: If a human writer uses advanced editing tools (like Grammarly’s generative rewrite or paraphrase features, which themselves use LLMs) extensively to polish their work, the resulting text can become too coherent, too structurally sound, and too grammatically perfect, making it resemble the output of a machine.

3. Subject Matter and Tone Consistency

In fields like science, engineering, or business, the language is often technical, objective, and dense with jargon.

- Overly Formal Tone: The consistent tone these fields require, along with sparse use of personal pronouns (I, we) and emotional language, limits burstiness and semantic variation. This formulaic approach, while required by the discipline, can be mistaken by an AI detector for a lack of human insight.
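Burstiness can be pictured with a simple proxy: how much sentence length varies relative to the average. The sketch below uses the standard deviation of sentence lengths divided by their mean. This measure, the naive sentence splitter, and both sample texts are assumptions made for illustration; real detectors may define burstiness differently.

```python
import re
import statistics


def sentence_lengths(text):
    """Split on ., ! or ? and return the word count of each sentence."""
    sentences = [s for s in re.split(r"[.!?]+", text) if s.strip()]
    return [len(s.split()) for s in sentences]


def burstiness(text):
    """Standard deviation of sentence length divided by the mean length."""
    lengths = sentence_lengths(text)
    if len(lengths) < 2:
        return 0.0  # variation is undefined for a single sentence
    return statistics.pstdev(lengths) / statistics.mean(lengths)


uniform = "The method is simple. The result is clear. The data is clean."
varied = (
    "It failed. After three weeks of careful tuning and many late nights, "
    "the model finally converged on a stable answer. Why?"
)

print("uniform:", round(burstiness(uniform), 2))
print("varied :", round(burstiness(varied), 2))
```

The uniform sample scores zero because every sentence has the same length, while the varied sample scores well above it.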

How Different Tools Approach AI Patterns

While all tools look for the low perplexity (predictability) and low burstiness (uniformity) of machine text, their application and confidence levels vary:

| Detector Tool | Focus of AI Detection | Risk of False Positive (General) | Notes on Inaccuracy |
| --- | --- | --- | --- |
| Turnitin | Statistical markers of LLMs (GPT-3/4) and AI paraphrasing. | Lowest (targeted <1% FP for high-score documents) | Less reliable on scores of 1-19%. Often flags generic intro/conclusion sentences. Can flag AI-assisted editing tools like Grammarly’s rephrase feature. |
| GPTZero | Perplexity and burstiness. | Medium to High (varies based on text complexity) | Tends to flag overly simple, formal, or minimalist writing styles as they lack variation. |
| Copyleaks | Frequency ratios of AI-common phrases vs. human phrases. | Low | Can flag AI-assisted editing tools like Grammarly’s rephrase feature. |
| ZeroGPT | Statistical pattern matching (black box). | Highest (commonly reported by users) | Often cited by students as highly sensitive and inconsistent, frequently mis-flagging academic papers. |

How to Confirm If Your Flagged Text Is Really AI-Written

If your original work receives a high AI score, especially from a tool with a reputation for inaccuracy such as ZeroGPT, it is crucial to remain calm and gather evidence. The detection score is a flag for inquiry, not a definitive verdict.

Here are steps to verify the authenticity:

- Compare with Drafts and Notes: Review your saved versions, outlines, and notes. The paper’s writing history (time spent typing, revisions made) is the most powerful evidence against an AI claim.

- Run Through Multiple Detectors (Cautiously): Use a second or third tool (like GPTZero or Copyleaks) to see if the flagging is consistent. Warning: Inconsistent results are common and often indicate an AI detector error rather than machine-written text.

- Request an Official Turnitin AI Report: Official institutional reports are widely regarded as the most reliable, because the underlying model is trained on academic text and designed to keep false positives to a minimum. PlagAiReport.com provides direct, official Turnitin AI reports without requiring a university login, giving you a stronger basis for verification.

- Review the Highlighted Text: Check the highlighted sentences in the report. Do they correspond to:

  - Highly technical definitions?

  - Generic conclusion statements?

  - Areas where you used an AI-powered grammar/rephrase tool?

Tips to Reduce the Risk of False Positives

The best way to make sure a detector reads your work as human is simply to write like a confident, authentic human. You don’t need to “trick” the system; you just need to embrace the stylistic qualities that machines struggle to replicate.

- Vary Sentence Length and Rhythm: Deliberately mix short, punchy sentences with long, complex ones. This creates high “burstiness.”

- Include Personal Examples or Opinions: Add personal pronouns (“I believe,” “In my experience,” “We must consider”). AI can’t replicate your lived experience.

- Embrace Small “Imperfections”: Use occasional interjections, qualifiers (“perhaps,” “it seems that”), or even slightly casual phrasing where appropriate. Don’t let AI-assisted editing strip your text of its natural, human voice.

- Write the Initial Draft Yourself: Avoid the temptation to use AI for the first draft. The act of drafting forces you to use your natural word choice and structure, which creates the high perplexity detectors look for in human-written work.

- Manually Rephrase: If you use an AI tool for paraphrasing or summarizing a source, close the AI output and rewrite the summary completely in your own words before using it in your paper.

The Importance of Turnitin AI Report Accuracy

The reliability of detection depends heavily on the model’s training and purpose. Free, public AI checkers often use simpler, generalized models. While they may be good at identifying obvious, raw AI output, they frequently flag human text as AI when the writing is formal, concise, or complex.

Turnitin’s AI model, integrated into thousands of learning management systems, is designed specifically for academic writing and benefits from continuous data refinement to maintain a very low false positive rate. This difference in accuracy is critical:

| Tool Category | False Positive Concern | Recommendation |
| --- | --- | --- |
| Free/Public Tools (ZeroGPT, some GPTZero results) | High; often misclassifies professional or formal human writing. | Use only for informal, low-stakes checks. |
| Official Institutional Tools (Turnitin) | Low; highly trained to distinguish academic human writing from LLM text. | Essential for high-stakes submissions (theses, major papers). |

For writers or students who do not have direct access to Turnitin’s service, using PlagAiReport.com is the best way to get a verified, official Turnitin AI report to ensure maximum confidence in your work’s integrity.

What to Do If Your Human Text Is Flagged as AI

Being accused of using AI when you didn’t can be stressful, but you have the right to defend your work.

- Don’t Panic: Recognize that the tool is just a piece of software, and the score is probabilistic, not conclusive. False positives are a known limitation.

- Gather Evidence: Collect your drafts, source materials, and any documented process (like a writing journal or Google Docs history). This is proof of work.

- Explain Your Process: Approach your instructor or editor calmly. State clearly that the work is 100% human-written and explain the writing choices that might have triggered the flag (e.g., “I used a very formal, consistent structure because this is a scientific report”).

- Use Verified Reports: If the accusation stems from an unofficial checker, share the results of an official, verified report as a more credible and accurate metric.

The conversation should always center on academic integrity and your demonstrated knowledge, not just on a percentage score.
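To make "probabilistic, not conclusive" concrete, the sketch below applies Bayes' rule to estimate how likely a flagged document is to be AI-written. All three inputs are assumed values chosen for illustration: the share of submissions that are AI-written, the detector's true positive rate, and a 1% false positive rate used as a stand-in. None of them are vendor figures, and `prob_ai_given_flag` is a hypothetical helper name.

```python
def prob_ai_given_flag(prior_ai, true_positive_rate, false_positive_rate):
    """Bayes' rule: probability a document is AI-written given that it was flagged."""
    flagged_ai = prior_ai * true_positive_rate
    flagged_human = (1.0 - prior_ai) * false_positive_rate
    total = flagged_ai + flagged_human
    if total == 0:
        raise ValueError("no document would ever be flagged with these rates")
    return flagged_ai / total


# Assumed values for illustration only, not published detector statistics.
for prior in (0.02, 0.10, 0.30):
    p = prob_ai_given_flag(prior, true_positive_rate=0.85, false_positive_rate=0.01)
    print(f"share of AI-written submissions {prior:.0%}: P(AI | flagged) = {p:.2f}")
```

Under these assumptions, the same flag carries less weight in a class where very few students use AI, which is one reason a score should prompt a conversation rather than settle it.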

The Future of AI Detection Accuracy

The industry is in a constant technological arms race. Turnitin, GPTZero, and Copyleaks all regularly update their models to improve recall (detecting AI) while simultaneously lowering the false positive rate. Turnitin, for instance, has adjusted its models to reduce false positives in generic introductory and concluding sentences.

The trend is moving toward sophisticated models that analyze context, writing style evolution, and document metadata, not just statistical predictability. However, until AI detectors are flawless, the writer’s voice and the instructor’s judgment will always be the final, most reliable authority.

Conclusion

AI detection tools are valuable for maintaining academic and professional standards, but they are not infallible. False positives are a real problem, caused by the technical limitations of pattern recognition.

As an authentic writer, your best defense is to:

- Be Authentic: Inject your unique voice and personality into your writing.

- Be Varied: Use diverse sentence structures and word choices.

- Be Prepared: Know how to interpret an official Turnitin AI report correctly, and use it to confidently verify your original work.
